I forgot I bought 3 avocados, they're overripe, I can't eat them, I'm sad, and now I'm learning CUDA

Concept of CUDA

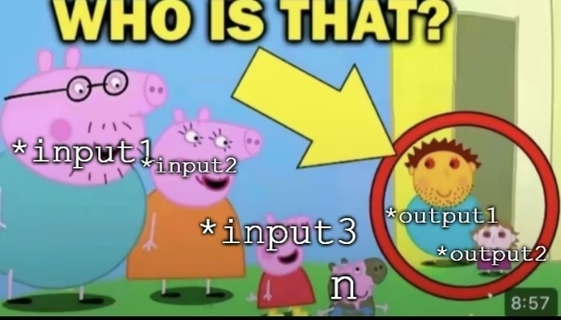

CPU and GPU have separate memories. The workflow for doing accelerated device computation is:

- Allocate memory on GPU (

cudaMalloc) - Copy input data from CPU → GPU (

cudaMemcpyHostToDevice) - Launch kernel (GPU does the work)

- Copy results from GPU → CPU (

cudaMemcpyDeviceToHost) - Free GPU memory (

cudaFree)

We call a kernel function the function that runs on a GPU. In our compiler team, we simply call this Device function. Apparently, some founding engineers decided this because it's the "central" or "core" of the computation.

We should truly never give engineers decision-making on important things, because its definition collides with 872316487 other meanings.

We define this kernel function and add this __global__ keyword to mark a function as a device function.

__global__ void vecAdd(int *a, int *b, int *c, int n) {

int i = blockIdx.x * blockDim.x + threadIdx.x;

if (i < n) {

c[i] = a[i] + b[i];

}

}

And yes, as you see, kernels don't have return values, they use output as parameters instead.

Now before we jump into the kernels, calling convention, it's better for us to know the limitations of CUDA.

Hard Limits (All CUDA GPUs)

| Resource | Max Value |

|---|---|

| Threads per block | 1024 |

| Block dimensions | $x = 1024, y = 1024, z= 64$ |

| Total Size | $xyz \leq 1024$ |

| Grid dimensions | $x = 2^{31} - 1, y = z = 2^{16} - 1$ |

Let's define it to be something like

struct Dim3 {

int x, y, z;

};

Dim3 threadIdx; // Thread index within block

Dim3 blockDim; // Block size, threads per block

// its true that:

// - (0 <= threadIdx.x < blockDim.x)

// - (0 <= threadIdx.y < blockDim.y)

// - (0 <= threadIdx.z < blockDim.z)

Dim3 blockIdx; // Block index within the grid

Dim3 gridDim; // Grid size (blocks per grid)

// its true that:

// - (0 <= blockIdx.x < gridDim.x)

// - (0 <= blockIdx.y < gridDim.y)

// - (0 <= blockIdx.z < gridDim.z)

int warpSize;

Now if you think about it, the maximum threads per launch is actually $1024 \times (2^{31} -1) \times (2^{16} - 1)^2$. But this is absurd. Actually, threads are virtual. Now, introduce the concept of SM (Streaming Multiprocessors).

🏢 Kitchen = GPU

│

├── 🔥 Stove (SM) — physical hardware, LIMITED (e.g. 68)

│ │

│ ├── 📦 Station (Block) — scheduled onto stoves

│ │ └── 🍳🍳🍳 Chefs (Threads)

│ │

│ ├── 📦 Station (Block) — multiple stations share one stove

│ │ └── 🍳🍳🍳 Chefs (Threads)

│ │

│ └── 🧊 Counter space = Shared Memory (split between stations)

│

├── 🔥 Stove (SM)

│ ├── 📦 Station...

│ └── 📦 Station...

│

└── 🔥 Stove (SM)

└── ...

// RTX 3080: 68 SMs × 16 max blocks per SM = 1088 simultaneous blocks

kernel<<<1000000, 256>>>(); // 1 million blocks launched

// only 1088 run at once

// remaining 998,912 wait in queue

| Architecture | CC | Max Threads per SM | Max Blocks per SM | Max Warps per SM | Register File per SM | Max Registers per Thread | Shared Memory per SM | Max Shared Memory per Block |

|---|---|---|---|---|---|---|---|---|

| Ada Lovelace (RTX 40xx) | 8.9 | 1,536 | 24 | 48 | 65,536 (64K) 32-bit | 255 | 100 KB | 99 KB |

| Ampere (RTX 30xx consumer / RTX Axx) | 8.6 | 1,536 | 16 | 48 | 65,536 | 255 | 100 KB | 99 KB |

| Ampere (A100 / A40 etc.) | 8.0 | 2,048 | 32 | 64 | 65,536 | 255 | 164 KB | 163 KB |

| Hopper (H100 / H200) | 9.0 | 2,048 | 32 | 64 | 65,536 | 255 | 228 KB | 227 KB |

| Blackwell (datacenter B100/B200/GB200) | 10.0 | 2,048 | 32 | 64 | 65,536 | 255 | 228 KB | 227 KB |

| Blackwell (consumer RTX 50xx series) | 12.0 | 1,536 | 32 | 48 | 65,536 | 255 | 128 KB | 99 KB |

CUDA calculates “how many blocks fit on ONE SM?” It takes the minimum of these limits:

| Limiting factor | How it’s calculated (Blackwell example) | Typical limit |

|---|---|---|

| Hardware max blocks | 24 (consumer) or 32 (datacenter) | 24 or 32 |

| Shared memory | floor( sharedMemPerSM / (sharedPerBlock + 1 KB overhead) ) | e.g. 228 KB / 10 KB = 22 blocks |

| Registers | floor( 65,536 registers / registers per block ) | varies |

| Threads | floor( 1536 or 2048 threads / threads per block ) | varies |

The shared memory you requested is often the one that decides the final number.

| Keyword | Meaning |

|---|---|

__global__ | Callable from host, runs on device (the kernel) |

__device__ | Callable from device only, runs on device (helper function) |

__host__ | Callable from host, runs on host (normal CPU function) |

| Keyword | Scope | Lifetime | Speed |

|---|---|---|---|

__shared__ | Block | Block | Fast |

__constant__ | All threads (read-only) | Application | Fast (cached) |

__device__ | All threads (global) | Application | Slow |

| (no qualifier) | Thread (register/local) | Thread | Fastest |

| Keyword/Function | What It Does |

|---|---|

__syncthreads() | Barrier — all threads in block must reach this point |

__syncwarp(mask) | Barrier for threads within a warp |

__threadfence() | Ensures memory writes visible to all threads (device) |

__threadfence_block() | Same but within block only |

__threadfence_system() | Visible to host + all devices |

__constant__ int TABLE[64]; // global scope

__device__ int helper(int x) { return x*2; } // device-only function

__global__ void kernel(int *d_data, int n) { // THE kernel

__shared__ float cache[256]; // block-local fast mem

int i = blockIdx.x * blockDim.x + threadIdx.x; // built-ins

cache[threadIdx.x] = d_data[i];

__syncthreads(); // sync

atomicAdd(&d_data[0], 1); // atomic

unsigned mask = __ballot_sync(0xFFFFFFFF, i < n); // warp vote

}